AI with Kyle Daily Update 186

Today in AI: Anthropic's AI Fluency Course

I watched Anthropic's AI Fluency course so you don't have to.

It's a free official course called AI Fluency: Framework & Foundations. Their own view of what good AI use actually looks like in 2026. About 90 minutes of video. I sat down with a coffee, took notes, ran a highlighter through the slides, and condensed the whole thing into the deck below… plus the cheat-sheet PDF at the bottom of this email.

I want to extract the main frameworks for you and give you the gold nuggets from the course. Absolutely take the course yourself (there's a certification too, so that's nice), but if you have less time, use this guide to get the core value.

Slide deck is below. Today's PDF giveaway is the AI Fluency Cheat Sheet: the whole framework on ten pages. The 4Ds, the 3 modes, the loop, all of it.

The Big Takeaway

Most people think AI skill = better prompts.

Anthropic says AI skill = four competencies. The 4Ds:

Delegation - what should AI even do?

Description - how should I brief it? (THIS is the prompting part)

Discernment - can I trust this output?

Diligence - did I use it responsibly?

Three Ways To Use AI

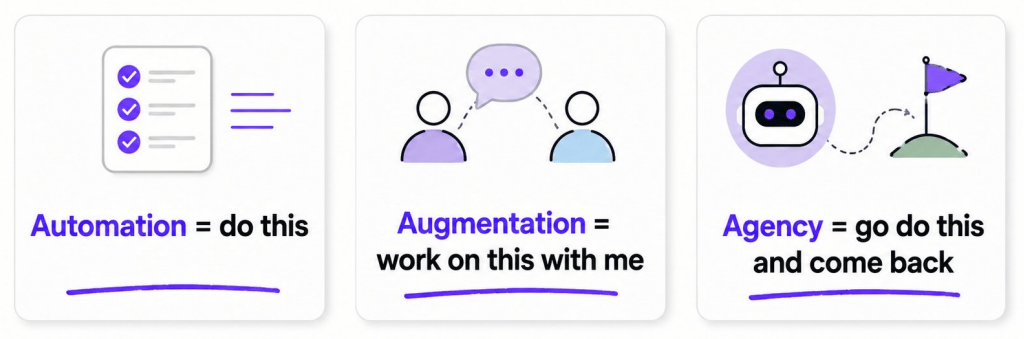

Anthropic splits AI use into three modes. This is the first framework they give us in the course:

Automation = "do this". You give AI a repetitive task. It does it. You walk away. Best for transcription, formatting, summarising long docs, repetitive boilerplate.

Augmentation = "work on this with me". You and AI are sat next to each other (metaphorically) iterating together. Best for drafting, brainstorming, problem-solving, anything where your judgment is constantly in the loop.

Agency = "go do this and come back". You delegate a multi-step outcome. Agent goes off, plans, executes, returns. Best for research, complex tasks, anything you'd otherwise hand to a junior employee.

Most people are stuck in Automation mode (do my task) and don't realise Augmentation is where 80% of the leverage lives. Agency is where the next leap will come from, and we're already seeing it with Claude Code, Codex, Hermes, OpenClaw. This is the more “modern” way of using AI in 2026; as recently as 2025 it was a bit… wobbly.

Quick gut check: when you opened ChatGPT or Claude this morning, which mode did you use? Probably Automation. Could you have used Augmentation, or maybe even Agency? Worth thinking about.

The 4Ds: The Framework Worth Stealing

This is the most valuable part of the course.

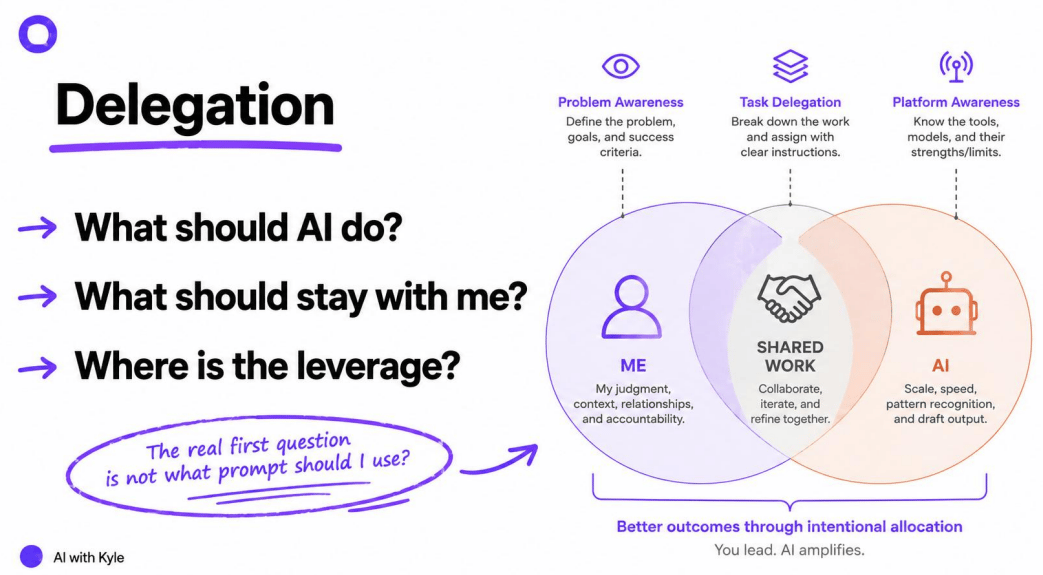

Delegation - What Should AI Do?

The first question here is not "what's a good prompt?". The real first question is "what part of this job should the AI even touch?".

Anthropic uses a Venn-style picture: ME, SHARED, AI. Your judgment, context, and relationships sit with you. Scale, speed, and pattern recognition sit with AI. The middle is where you collaborate.

Examples:

First draft of an email? AI. (Yes.)

Final opinion on whether to send it? You. (Probably.)

A comparison table of three suppliers? AI. (Yes.)

Blindly trusting the AI in strategic decisions? No. (Never.)

Most bad AI use is not a prompt problem. It's a work allocation problem.

Previously I outlined my content production system in this rather, ahem, comprehensive diagram:

Blue boxes are the AI processes. Orange boxes are the human processes.

For your own AI usage and workflows, you need to decide what to offload and what to retain. Delegation is the first job.

Description - How Should I Brief It?

This is the slot where prompting actually lives. Anthropic breaks it into three parts: Product (what you want made), Process (how it should approach the task), and Performance (how it should behave while collaborating).

My god, they like all their frameworks to use the same letter, huh?

When it comes to what you actually include in your prompt, they break it down into the usual prompting tactics. If you want a clean structure for this, the RISEN framework works well.

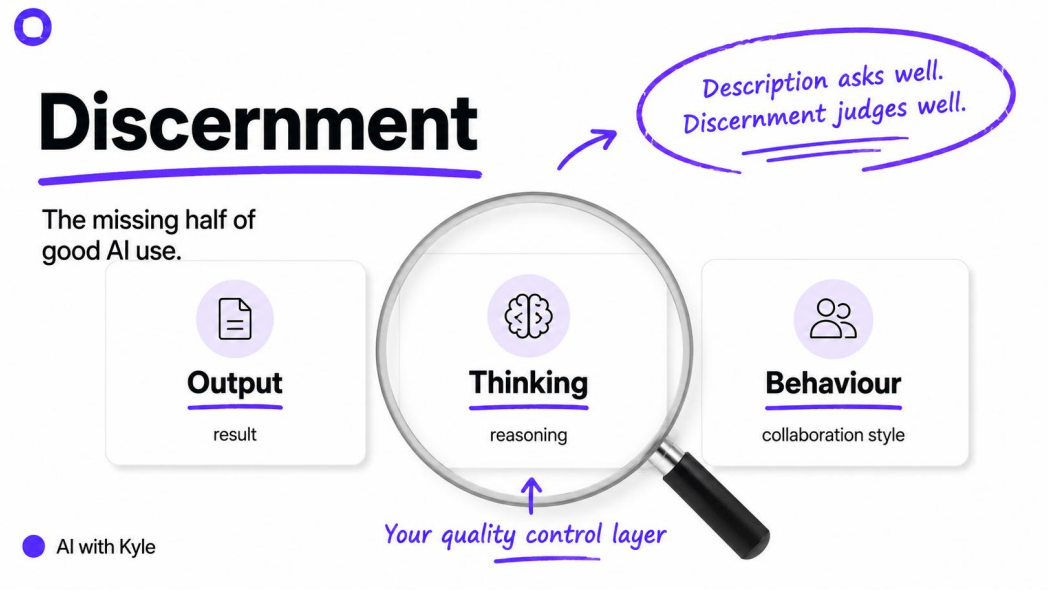

Discernment - Can I Trust This Output?

The missing half of how most people use AI. Description asks well. Discernment judges well. You (the human!) critically evaluate three things: the output, the thinking, the behaviour.

Remember that AI fails smoothly. Polished does not mean right. Confidence does not mean competence - shit, AI tends to be more confident when it is hallucinating!

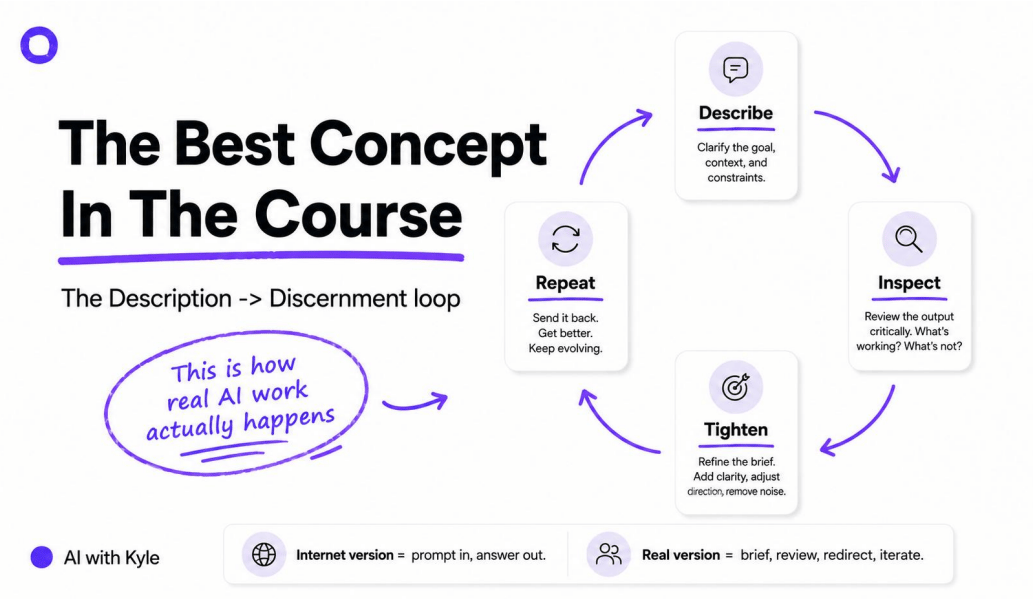

You need to use both Description (input) and Discernment (output), comparing what you asked for with what you actually got. There’s a loop here:

Honestly, this is the part most people mess up. They create something with AI. It looks pretty good. Cool, ship it.

Don’t do that.

READ it. Check it. Fix anything that is incorrect. Repeat this until the quality is where it needs to be!

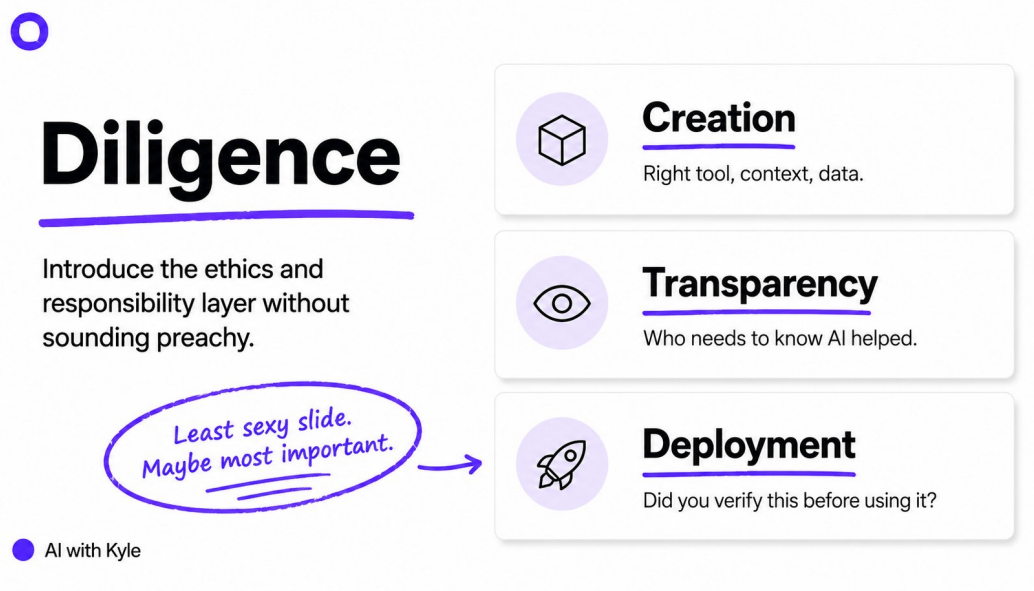

Diligence - Did I Use It Responsibly?

This last step is very Anthropic… a bit dull, not at all sexy, but important.

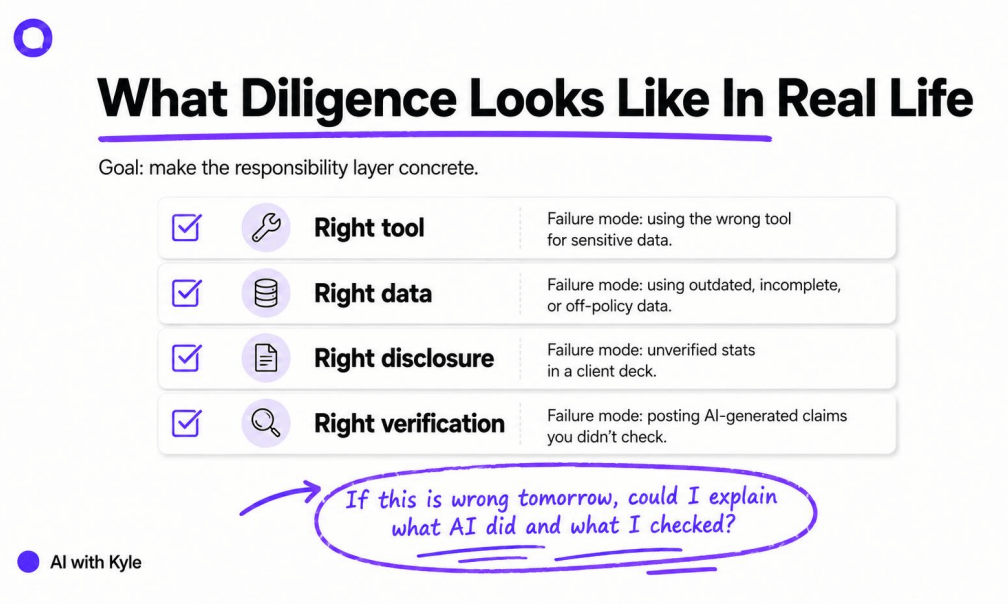

This is the ethical layer. You need to make sure that you’ve been responsible in your usage of the AI. Did you use the right tool and the right data? Were you transparent? Did you verify?

Here’s a quick checklist to make this actionable:

Anthropic puts it succinctly: “If this is wrong tomorrow, could I explain what AI did and what I checked?” Did you at least put in the due diligence here, or were you lazy? Can you cover your arse?

If the answer is no… you skipped Diligence. Don't ship it. Do the work properly. Lazy-bones.

What Anthropic Got Right

Three things:

Judgment not hacks. The whole course teaches you how to think, not what to prompt.

Collaboration not magic. AI is positioned as a partner, not a replacement. Augment yourself with AI rather than purely automating - use AI in different modes as needed.

Human expertise still matters. Your experience is the edge. You need it for the Discernment step. Otherwise your output will be crap and you won’t know it.

It's a more grown-up version of AI literacy than the pure prompt-engineering content dominating LinkedIn and the like. Here’s the full course from Anthropic: https://anthropic.skilljar.com/ai-fluency-framework-foundations

Want the presentation and summary cheat sheet? It's available here: https://aiwithkyle.com/resources/ai-fluency

Kyle