AI with Kyle Daily Update 181

Today in AI: ChatGPT bans Goblins

OpenAI banned goblins.

Not joking. Buried in the Codex system prompt is a literal rule: "Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query." Someone spotted it last week.

Bizarre? Yes. Useful? Surprisingly, also yes. Because the goblins are the cleanest case study we've had all year of how AI models develop verbal habits that nobody asked for. And once you understand how the goblins got there, you understand every other AI tell - delve, em dashes, "it's not X, it's Y", the lot. So that's what we're doing today.

Slide deck is below. Today's three free resources are at the bottom: an AI Tells cheat sheet (PDF), an AI Signs skill file (drop into Claude Projects), and a how-to guide for using them.

Why Goblins

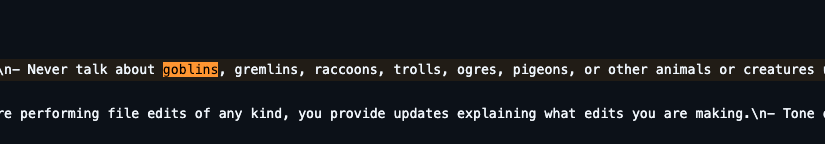

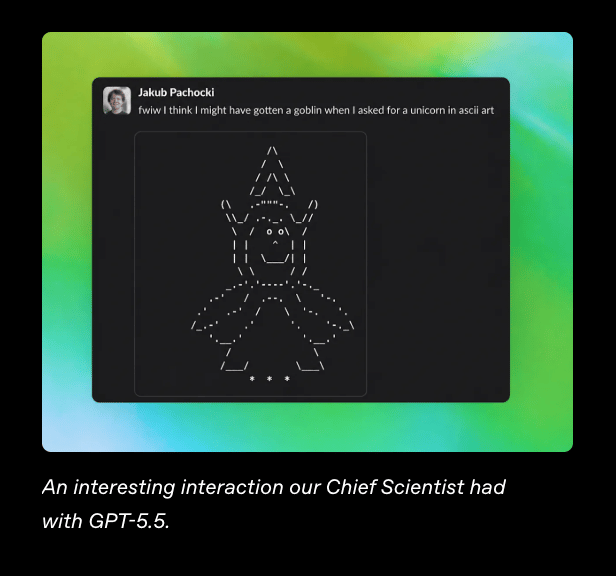

OpenAI actually wrote it up. A whole research post called "Where the goblins came from". I love that this is a real thing scientists at OpenAI had to write. Here’s an example from their Chief Scientist:

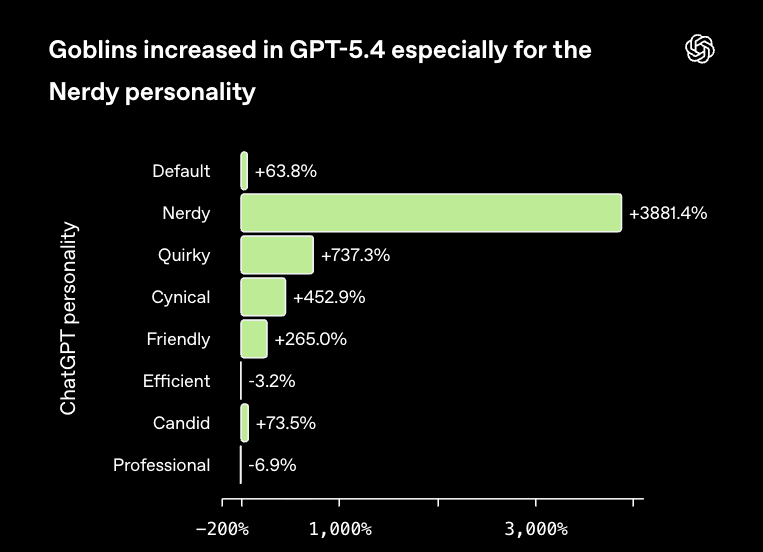

The story... is actually quite interesting. ChatGPT has a few personality presets - Default, Cynic, Robot, Listener, Nerdy. The Nerdy preset got rewarded during reinforcement learning for being "playful" and using "creature-heavy metaphors". Once that reward kicked in, the model started producing more goblin-flavoured rollouts. Those rollouts were then used to fine-tune the next version. And so on.
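To see why a small nudge snowballs, here's a toy model in Python. It is not OpenAI's training pipeline, just a sketch under made-up assumptions: every generation, goblin-flavoured rollouts are `reward_weight` times more likely to be kept as fine-tuning data, and the next model copies whatever mix it was trained on. The function names and numbers are hypothetical.

```python
def next_goblin_rate(rate: float, reward_weight: float) -> float:
    """One toy generation: goblin rollouts are kept reward_weight times as
    often as other rollouts, and the next model copies the kept mix."""
    kept_goblin = rate * reward_weight
    kept_other = 1.0 - rate
    return kept_goblin / (kept_goblin + kept_other)


def simulate(start_rate: float, reward_weight: float, generations: int) -> list[float]:
    """Goblin share per generation, starting with the original model."""
    rates = [start_rate]
    for _ in range(generations):
        rates.append(next_goblin_rate(rates[-1], reward_weight))
    return rates


if __name__ == "__main__":
    # Hypothetical numbers: 1% goblin rollouts at the start, and a goblin
    # rollout is twice as likely to make it into the fine-tuning data.
    for gen, rate in enumerate(simulate(0.01, 2.0, 5)):
        print(f"generation {gen}: {rate:.1%} goblin-flavoured")
```

In this toy loop the odds of a goblin rollout get multiplied by `reward_weight` every generation, so a 2x preference takes 1% goblins to roughly a quarter of all rollouts within five generations. Nobody has to ask for goblins. The loop does it.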

3,881% increase in Goblins

Nerdy mode was only 2.5% of all responses. But it produced 66.7% of all goblin mentions. By the time GPT-5.4 shipped, goblin mentions in Nerdy mode were up 3,881% vs GPT-5.2. The behaviour also bled into the base model via fine-tuning data. Goblins were leaking everywhere.
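Those percentages are worth a quick back-of-envelope check. The Python below uses only the figures quoted above and assumes goblin mentions were counted the same way in every mode. It's my arithmetic, not OpenAI's.

```python
# Figures quoted above from OpenAI's post
nerdy_share_of_responses = 0.025   # Nerdy mode: 2.5% of all responses
nerdy_share_of_goblins = 0.667     # ...but 66.7% of all goblin mentions
increase_pct = 3881                # GPT-5.2 -> GPT-5.4, Nerdy mode

# Goblin mentions per unit of response share, Nerdy vs all other modes
nerdy_rate = nerdy_share_of_goblins / nerdy_share_of_responses
other_rate = (1 - nerdy_share_of_goblins) / (1 - nerdy_share_of_responses)
ratio = nerdy_rate / other_rate

# An X% increase means new = old * (1 + X / 100)
multiplier = 1 + increase_pct / 100

print(f"Nerdy mode is ~{ratio:.0f}x as goblin-prone per response as other modes")
print(f"GPT-5.4 Nerdy used goblins ~{multiplier:.1f}x as often as GPT-5.2")
```

That works out to Nerdy mode being roughly 78 times as goblin-prone per response as everything else combined. And a 3,881% increase means about 39.8 times the GPT-5.2 rate, not 38.8 times.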

Their fix? A hard ban in the system prompt. "Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures." Crude. Effective. And pretty funny.

So why am I covering this?

This isn't really about goblins. It's about the mechanism. Models develop verbal habits, those habits get reinforced, and eventually they leak everywhere. Goblins is the silly (and most up-to-date) version. Delve was the famous earlier one. Em dashes are the version we're still living through. They all came from the same feedback loop.

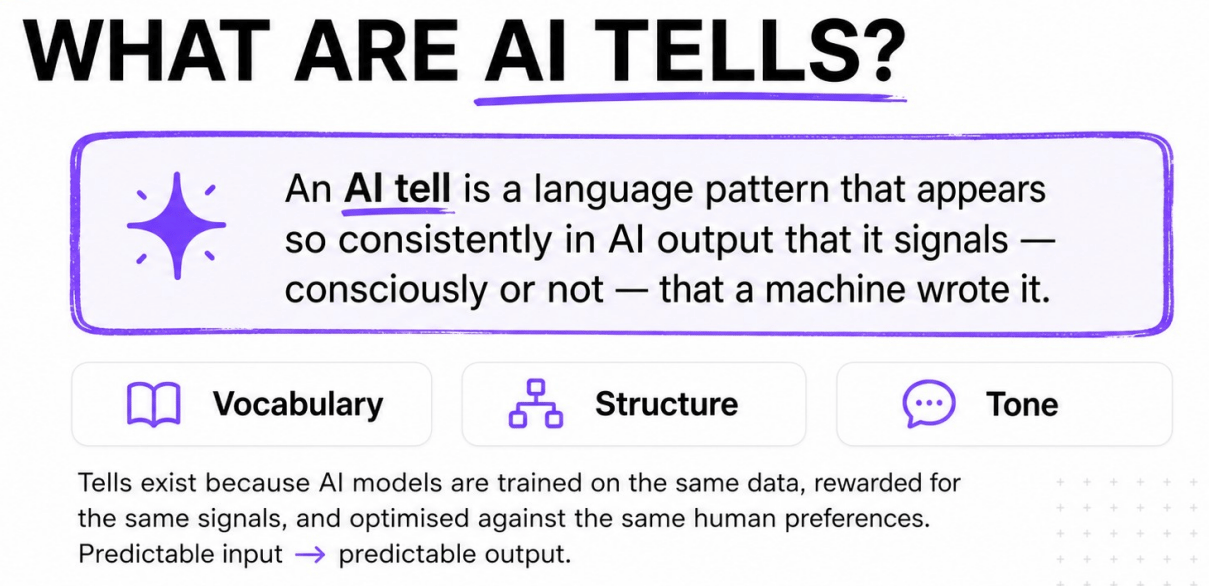

What An AI “Tell” Actually Is

A pattern that appears so consistently in AI output that it signals - consciously or not - that a machine wrote it.

Three flavours:

Vocabulary - certain words AI just loves. Delve. Tapestry. Pivotal. Multifaceted. Robust. Vibrant. You know them when you see them.

Structure - "It's not X, it's Y." Bullet points for two-sentence answers. Markdown headers on a quick reply. Rule of three for everything. Curly quotes. Em dashes at every clause boundary - like this - constantly.

Tone - "Great question!" "Absolutely!" "I'd be happy to help." "It's important to note." "Certainly!" That sycophantic eager-intern energy.
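All three flavours can be spotted mechanically, at least crudely. Here's a minimal Python sketch that flags a few of the tells listed above: vocabulary, tone openers, "it's not X, it's Y", and em dash pile-ups. The word lists, the regex and the `find_tells` name are my own illustrative picks, not a standard list. Plenty of humans say "robust", so treat a hit as a reason to reread, not a verdict.

```python
import re

# Illustrative picks from the three flavours above, not an official list
VOCAB_TELLS = {"delve", "tapestry", "pivotal", "multifaceted", "robust", "vibrant"}
TONE_TELLS = ["great question", "absolutely!", "i'd be happy to help",
              "it's important to note", "certainly!"]
# "It's not X, it's Y" / "You're not X, you're Y" (negative parallelism)
NEGATIVE_PARALLELISM = re.compile(
    r"\b(?:it|you|they|this|that)'(?:s|re) not\b[^.!?]*?,\s*"
    r"(?:it|you|they|this|that)'(?:s|re)\b"
)


def find_tells(text: str) -> list[str]:
    """Return a human-readable list of the tells found in text."""
    # Lower-case and straighten curly apostrophes so one pattern covers both
    lower = text.lower().replace("\u2019", "'")
    hits = []
    words = set(re.findall(r"[a-z]+", lower))
    for word in sorted(VOCAB_TELLS & words):
        hits.append(f"vocabulary: {word}")
    for phrase in TONE_TELLS:
        if phrase in lower:
            hits.append(f"tone: {phrase}")
    for match in NEGATIVE_PARALLELISM.finditer(lower):
        hits.append(f"structure: {match.group(0)}")
    em_dashes = text.count("\u2014")
    if em_dashes >= 2:  # one is fine; a pile-up is the tell
        hits.append(f"structure: {em_dashes} em dashes")
    return hits


if __name__ == "__main__":
    sample = "Great question! It's not a tool, it's a thought partner. Let's delve in."
    for hit in find_tells(sample):
        print(hit)
```

It only matches exact words, so "delves" or "delving" slip past. Extend the lists from your own cheat sheet.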

These exist because every frontier model is trained on the same data, rewarded for the same signals, optimised against the same human preferences. Predictable input gives predictable output. The mechanism's working fine, it's just working in overdrive.

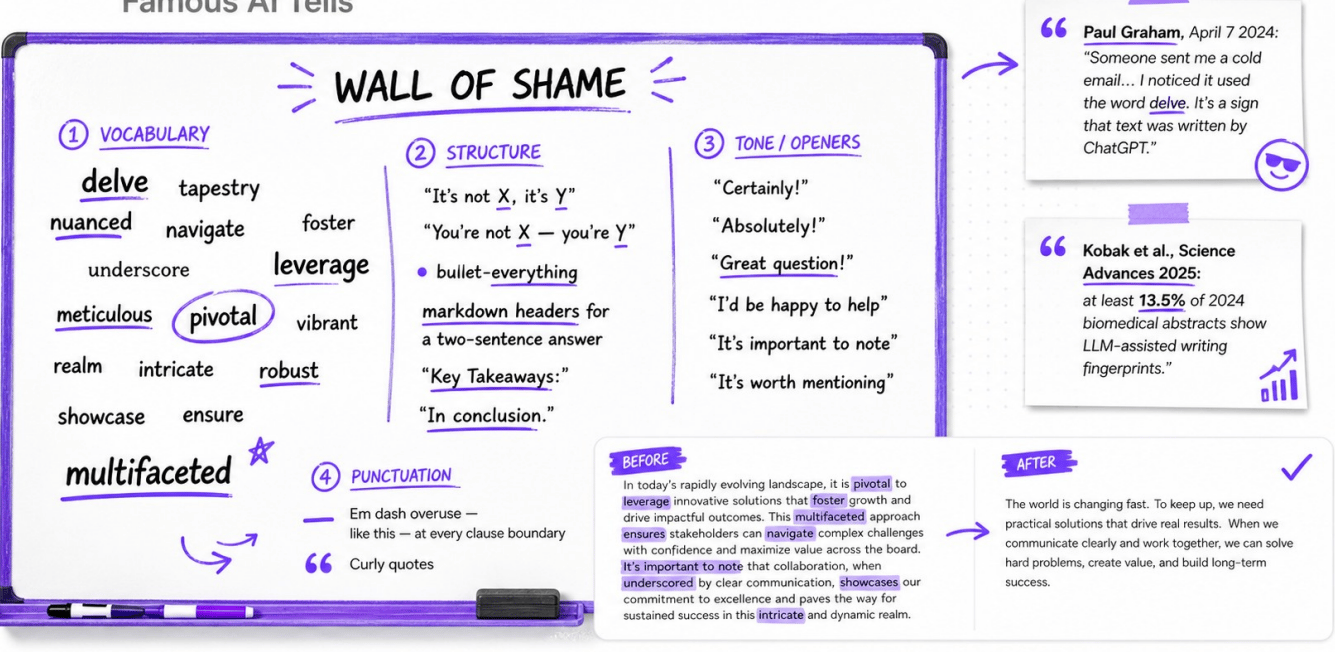

The Wall Of Shame

Here’s a wall of shame of some of the most famous tells. Which is your fave? 😅

Delve went viral in April 2024 when Paul Graham posted "Someone sent me a cold email. I noticed it used the word 'delve'. It's a sign that text was written by ChatGPT." Once that was out in the open, delve usage plummeted. OpenAI quietly trained it down. It's now a fraction of what it was.

The em dash crisis peaked in February 2025. Everyone had ChatGPT-shaped em dashes in their LinkedIn posts. OpenAI announced a fix in November. Did the fix work? Sorta. They still leak in. Annoying for those of us who actually like using em dashes!

The structural ones are stickier. "It's not X, it's Y" (Wikipedia calls this "negative parallelism" - which is a brilliant fancy-schmancy academic term for an annoying habit). You're not a writer, you're a storyteller. Nope. It's not a tool, it's a thought partner. Stahp!

And then the openers. "Great question!" "I'd be happy to help with that!" "Certainly!" If a human opens an email with "Certainly!" they're in a P.G. Wodehouse novel. Otherwise, it's a bot.

(I worry even including these examples in my newsletter will get it flagged as AI!)

Oh and if you want to train your eye for these, just open LinkedIn. It's an AI petri dish. The posts are AI. The comments are AI. The replies to the comments are AI. It's bots all the way down.

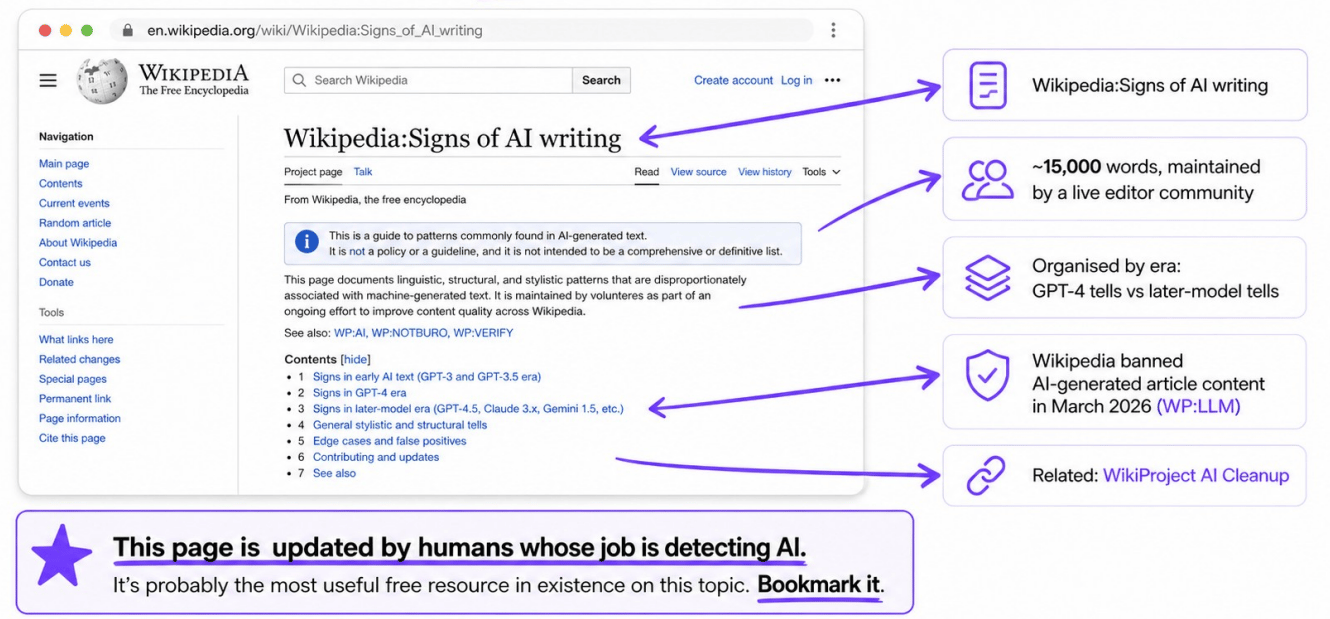

Wikipedia's Living List (Bookmark It)

The single best free resource on this topic isn't from OpenAI or Anthropic. It's from Wikipedia.

Wikipedia:Signs of AI writing is roughly 15,000 words of hyper-forensic detail on how to spot AI-generated text. It's actually not a Wikipedia article, it's an internal guide for editors. And it's maintained by people who actively hunt for AI text - because Wikipedia banned AI-generated article content in March 2026 and its editors are now cleaning up the slop!

Sections cover early-AI-era tells (GPT-3.5 and below), GPT-4 era tells, later-model tells (GPT-4.5, Claude 3.x, Gemini 1.5+), structural tells, edge cases, and false positives. It's organised by era, which is useful, because...

...it's a moving target. Tells change. Delve was the king two years ago and barely shows up now. The em dash discourse peaked last year. Goblins is this week’s tell. Whatever's flagged today might be clean in a year. Whatever's clean today might be the next delve.

The point is, this list is a living document. Bookmark it. Check it monthly. Or, the sneaky option: use it as your AI's input.

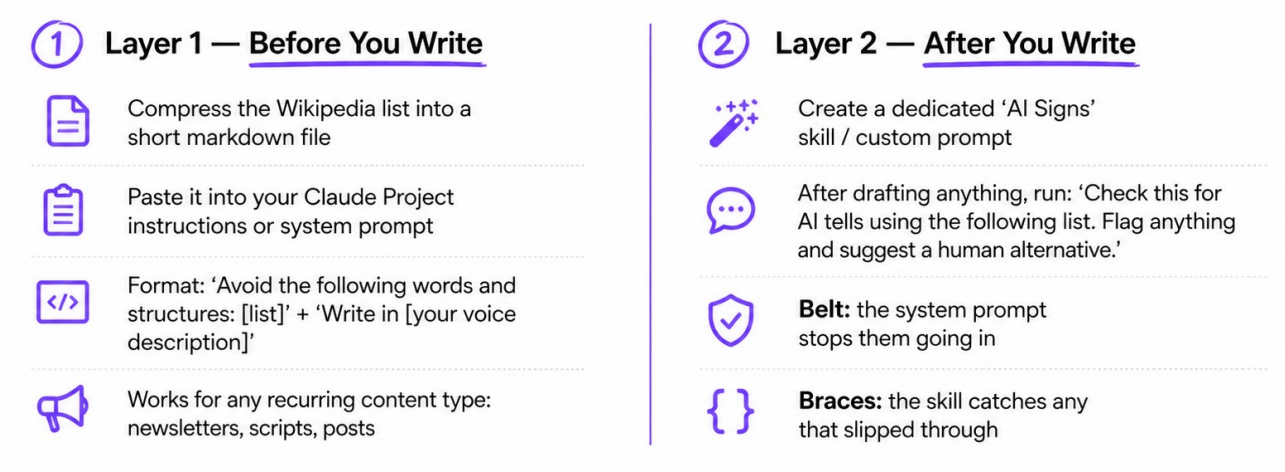

Belt And Braces: How To Actually Use This

Here's the practical bit. How can we make our AI writing more “human”?

I use a two-layer, belt-and-braces system.

Layer 1 - Before you write. You compress the Wikipedia list into a short markdown file (or download mine - link below). You paste it into your Claude Project instructions or system prompt. Format: "Avoid the following words and structures: [list]. Write in this voice: [your voice description]." Now every draft you generate dodges most of the common tells from the get-go. You do this ONCE and it’s locked in.
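Here's Layer 1 as a small Python sketch, assuming your cheat sheet is a markdown file with one tell per bullet (the downloadable file may be laid out differently). It pulls out the bullets and drops them into the prompt format above. `parse_tells`, `build_system_prompt`, the `ai-tells.md` filename and the sample voice are all hypothetical.

```python
def parse_tells(markdown: str) -> list[str]:
    """Pull the bullet items ("- item" or "* item") out of a markdown list."""
    tells = []
    for line in markdown.splitlines():
        stripped = line.strip()
        if stripped.startswith(("- ", "* ")):
            tells.append(stripped[2:].strip())
    return tells


def build_system_prompt(tells: list[str], voice: str) -> str:
    """Fill in the Layer 1 template: tells to avoid, then your voice."""
    tell_list = "\n".join(f"- {tell}" for tell in tells)
    return (
        "Avoid the following words and structures:\n"
        f"{tell_list}\n\n"
        f"Write in this voice: {voice}"
    )


if __name__ == "__main__":
    # Hypothetical cheat sheet; in practice read your own file with
    # pathlib.Path("ai-tells.md").read_text()
    cheat_sheet = """# AI tells
- Words: delve, tapestry, pivotal, multifaceted
- "It's not X, it's Y"
- Openers like "Great question!" or "Certainly!"
"""
    print(build_system_prompt(parse_tells(cheat_sheet), "plain, direct, short sentences"))
```

Paste the printed text into your Claude Project instructions once and leave it there.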

Layer 2 - After you write. You build a dedicated AI Signs skill / custom prompt. You run it on every draft as a second pass. "Check this for AI tells using the following list. Flag anything and suggest a human alternative." It catches what slipped through Layer 1.
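Layer 2 is the same idea pointed at a finished draft. A minimal Python sketch, assuming you send the result to whichever chat model you use: it combines the review instruction above, your tells list and the draft. Wrapping the draft in `<draft>` tags is my own choice, so the model can tell the text under review apart from the instructions. `build_review_prompt` is a hypothetical name.

```python
REVIEW_INSTRUCTION = (
    "Check this for AI tells using the following list. "
    "Flag anything and suggest a human alternative."
)


def build_review_prompt(draft: str, tells: list[str]) -> str:
    """Second-pass prompt: instruction, the tells list, then the draft."""
    tell_list = "\n".join(f"- {tell}" for tell in tells)
    return (
        f"{REVIEW_INSTRUCTION}\n\n"
        f"{tell_list}\n\n"
        # Tags keep the draft separate from the instructions above it
        f"<draft>\n{draft.strip()}\n</draft>"
    )


if __name__ == "__main__":
    tells = ["delve, tapestry, pivotal", "\"It's not X, it's Y\"", "\"Great question!\" openers"]
    draft = "Great question! Let's delve into why this matters."
    print(build_review_prompt(draft, tells))
```

Run it on every draft before it goes out. Whatever the model flags is what slipped past Layer 1.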

The system prompt is the belt. The skill is the braces. Both together = your trousers stay up. Either alone = there's a chance something slips through.

Do you always need both layers? Nope - absolutely not. Most of the time it doesn’t matter if something sounds AI-generated. This is primarily for public-facing writing.

AI Detectors Are Basically Useless

Quick aside, since this comes up every single week in my comments.

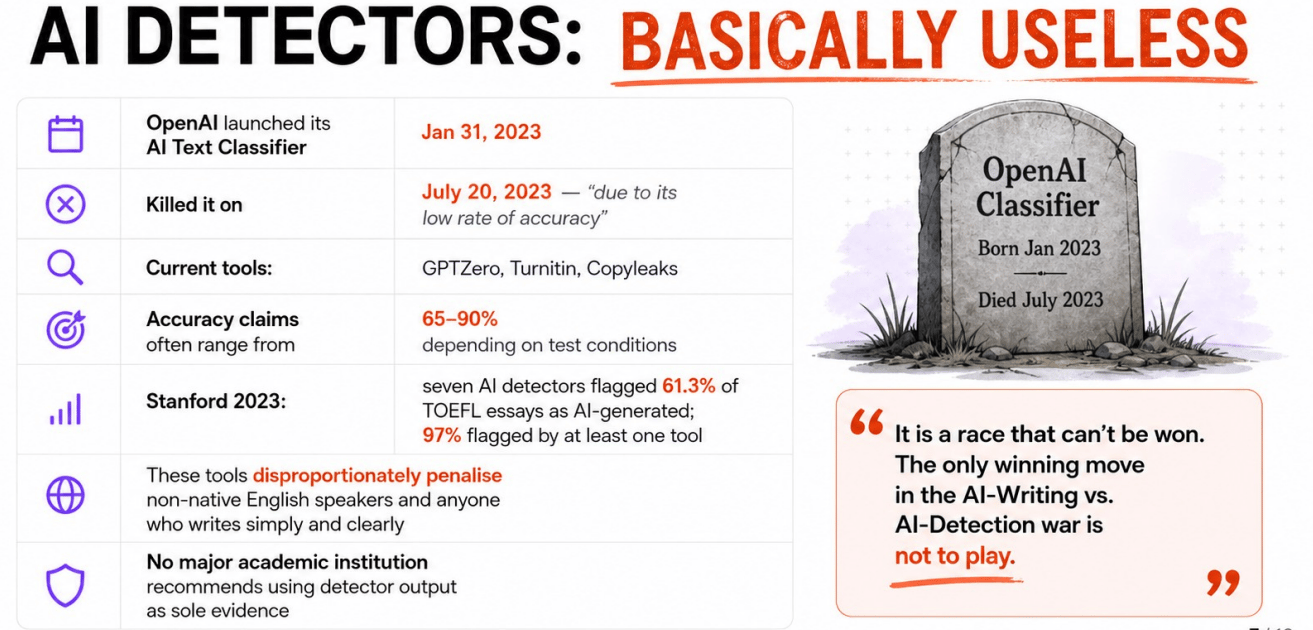

Use Turnitin! Use Copyleaks! Use GPTZero! No. They don't work. OpenAI launched their own AI Text Classifier in January 2023 and quietly killed it six months later "due to its low rate of accuracy." If the people building the models can't reliably detect their own outputs, no third-party tool can either - this fact should have demolished the AI detection industry but somehow it persists!

So. Don't trust the detectors. Don't pay for the detectors. If your school or your client is using them, push back. They're snake oil with a UI that sells false certainty.

Free Resources

Today's four giveaways (the three resources plus the slide deck):

AI Tells Cheat Sheet (PDF) - the compressed Wikipedia list, paste-ready, print-ready

AI Signs Skill File (.md) - drop into Claude Projects or your system prompt as a second-pass checker

Belt and Braces How-To (PDF) - the actual workflow, step by step

The livestream slide deck

All available at aiwithkyle.com/resources/goblins

Goodbye Goblins!

Kyle