AI with Kyle Daily Update 157

Today in AI: Moving to Claude

Moving to Claude: The No-BS Migration Guide

About 900 million people use ChatGPT. It's become the Google of AI - the thing everyone defaults to.

Buuut over the last few weeks, somewhere between 2.5 and 3 million people have quietly moved over to Claude. Some for political reasons. Some because they tried it and realised it's better for actual work. TechRadar even quoted me on how tricky the transition is for most people - go give the post some love so they keep asking me for my (supposedly expert...) opinion! AI with Kyle talks to TechRadar.

Here's the problem. Using Claude with ChatGPT habits is like switching from Excel to Photoshop and looking for spreadsheets. They're both software, but they're built for fundamentally different things.

So today I'm giving you the full migration guide. Step by step. I also made a PDF slide deck you can download (link at the bottom).

Why Claude Feels Different

ChatGPT is trained to please you. Claude is trained to give you the truth.

This sounds small. It's not.

ChatGPT uses RLHF - reinforcement learning from human feedback. It learns that agreeable answers get positive ratings. So it agrees with you. Hypes your ideas. Strings you along with teaser responses like "now here's the REAL secret..." instead of just telling you the answer. Feels nice…but not helpful.

Claude's training adds something called Constitutional AI. The model is trained to check its responses against a written set of core principles. If your idea has a flaw, it'll tell you. If your business plan doesn't make sense, it pushes back. It’ll straight up call your BS out.

That's not a bug. It's a feature. But some people hate it.

I personally use both. ChatGPT for personal stuff - travel planning, meal ideas, quick lookups. Claude for anything that involves “real” work. Writing, strategy, building, coding.

Claude also doesn’t “do” much beyond text. It doesn’t make images or videos. Its voice mode is a bit crap. It’s extremely focused on text. Which means it’s just not useful for some tasks.

Pricing

Three models. Think of them as tiers:

Opus 4.6 - The big brain. For heavy, complex, agentic work. Burns through your usage limits fast.

Sonnet 4.6 - The daily driver. Best reasoning and writing model at any price. This is what your $20/month gets you, and honestly, it's better than anything ChatGPT offers right now.

Haiku 4.5 - Fast and cheap. Best for simple, high-speed tasks. If you're on the API, this saves you money. Otherwise not much reason to use it.

The numbers 4.5, 4.6 etc. aren’t important here. Just remember Opus, Sonnet and Haiku and you’ll be fine.

One of the BIG problems with Claude is that $20/month isn't enough for heavy Opus usage. You’ll prompt a handful of times and be told you’ve hit usage limits and to come back in an hour. Or sometimes in a day!

At $20/month you are basically using Sonnet (the mid-model). Opus is pretty much off the table. Now if you're using Claude for business, $100-200/month for MAX is a no-brainer. If it's just personal use, it's probably too expensive.
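
If you like your rules of thumb written down, here's a rough sketch of the tier logic above in Python. It's just my reading of the guidance in this section, not anything official: the `Task` fields, the `pick_tier` function and the plan labels `"pro"` (standing in for the $20/month plan) and `"max"` are all made-up names for illustration, and the returned strings are plain labels, not real API model IDs.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """A hypothetical description of a job you want Claude to do."""
    description: str
    complex_or_agentic: bool = False  # heavy, multi-step work
    simple_and_fast: bool = False     # quick, high-volume jobs
    via_api: bool = False             # paying per token instead of a subscription


def pick_tier(task: Task, plan: str = "pro") -> str:
    """Return 'opus', 'sonnet' or 'haiku' as a label (assumed names, not API IDs)."""
    # Haiku mainly earns its keep on the API, where cheap and fast matters.
    if task.simple_and_fast and task.via_api:
        return "haiku"
    # Heavy Opus use only makes sense if the plan can absorb it.
    if task.complex_or_agentic and plan == "max":
        return "opus"
    # Everything else: the daily driver.
    return "sonnet"


if __name__ == "__main__":
    report = Task("Overnight brand research", complex_or_agentic=True)
    print(pick_tier(report, plan="pro"))
    print(pick_tier(report, plan="max"))
```

On the assumed `"pro"` plan the heavy task falls back to Sonnet, which matches the "Opus is pretty much off the table" point. Adjust the rules to taste.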

And for free users? ChatGPT's free tier is way more generous. They are aiming at a broad market and are fine with free users (especially as advertising is on its way in…). Anthropic don’t want free users. So their free plan is pretty stingy.

Claude Doesn't Know You (Yet)

ChatGPT has had persistent memory since Feb 2024. The Stone Age in modern AI timelines…

It knows your name, your job, your preferences. If you’ve been using it since then, ChatGPT has built up a record of who you are.

Claude only added persistent memory a few weeks ago…

And if you are moving over to Claude it’s not going to know shit about you.

This is annoying because it means you need to add context for everything. You need to be explicit about everything in your work and life to get the best results from Claude.

But…good news - Claude released a memory import feature. Deliberately timed for the ChatGPT exodus. Smart move from Anthropic honestly.

How to import: Settings > Capabilities > Import Memory. It gives you a prompt to paste into ChatGPT. ChatGPT exports your memory. You paste the output back into Claude. Takes about 2 minutes.

Do this first.

Workspace Superpowers

Two features most people miss:

Extended Thinking. For bigger queries turn this on. Claude shows you its reasoning step by step. It's like watching someone think through a problem before answering. On hard reasoning tasks, it can improve output by up to 54%. Whatever that means - I swear they make all this up.

Combine that with the heaviest model (Opus 4.6) and research mode and Claude becomes a BEAST. I ran an overnight research task on Opus with extended thinking last night. It took 30-40 minutes. Hundreds of sources. It came back with a full brand positioning report. Identified a gap in the market for an independent daily AI briefing with no sponsorships. Its insights are on point.

Artifacts. Interactive documents, mini apps, dashboards, data visualisations. Claude generates them right in the chat window. No copy-pasting required. ChatGPT just added something similar with "Blocks" but Claude's had this for a while. The mini apps in particular are great if you want to rapidly prototype a tool idea.

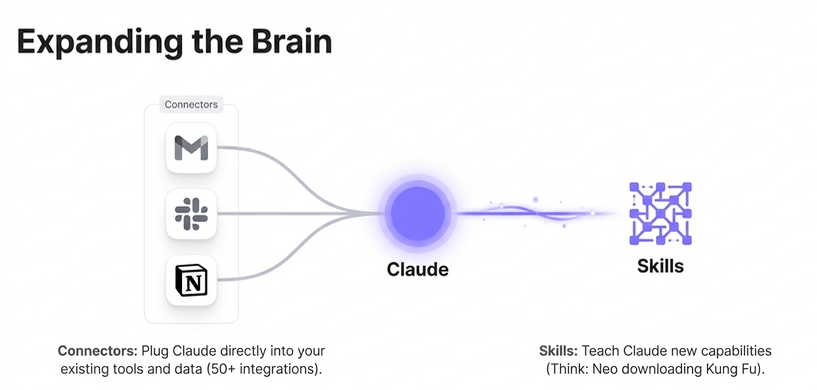

Expanding the Brain

Connectors plug Claude directly into your existing tools - Gmail, Google Drive, Notion, Slack, GitHub, Figma, Supabase, and 50+ others. This lets your Claude read from these tools and make/edit files, designs, databases and more. You can also build your own connectors if one doesn’t exist. But it probably does!

Skills are the one that gets me excited. Think Neo downloading Kung Fu. You can teach Claude new capabilities instantly. Download pre-made skills, write custom ones, or ask Claude to create a skill from a conversation. I use these heavily in Claude Code and I'll be doing a full presentation on them soon.

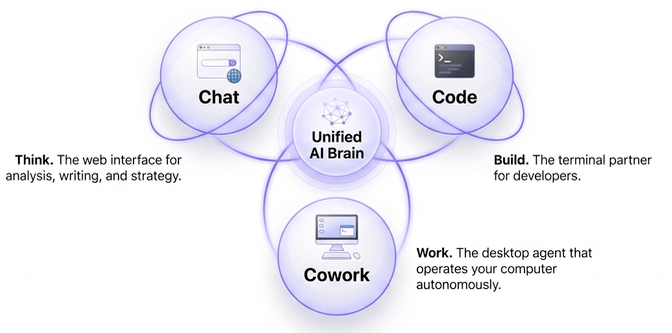

Chat, Code, Cowork

This is where it gets interesting. And also confusing - mainly because they named everything starting with C. Claude isn't just a chatbot. It's three tools:

Simplest way to remember them:

Claude Chat - for chat.

Claude Cowork - for work.

Claude Code - for building.

The problem is that they all sorta kinda overlap and encroach on each other. It’s confusing!

Here's the gap right now - Chat, Code, and Cowork don't share memory. They don't talk to each other. I built my own workaround using GitHub as a shared brain. You can do something similar but honestly it’s a faff. I expect Anthropic to fix this natively this year. When they do, it'll be very powerful.
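
To give a flavour of the "shared brain" idea, here's a minimal sketch. It is not my actual setup - just one way it could work, assuming a single markdown file that each tool appends to and reads from, kept in a folder you track with Git and push to GitHub yourself. The `shared-brain/memory.md` path and the `append_note` / `load_context` helpers are hypothetical names.

```python
from datetime import datetime, timezone
from pathlib import Path

# Hypothetical location: a folder you keep under Git and sync to GitHub.
MEMORY_FILE = Path("shared-brain") / "memory.md"


def append_note(tool: str, note: str, memory_file: Path = MEMORY_FILE) -> None:
    """Append one timestamped note, tagged with the tool that wrote it."""
    memory_file.parent.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M")
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(f"- [{stamp}] ({tool}) {note}\n")


def load_context(memory_file: Path = MEMORY_FILE, last_n: int = 20) -> str:
    """Return the most recent notes as text to paste into a new session."""
    if not memory_file.exists():
        return ""
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    return "\n".join(lines[-last_n:])


if __name__ == "__main__":
    append_note("chat", "Newsletter tone: direct, no sponsorships.")
    append_note("code", "Site repo uses a static site generator.")
    print(load_context())
```

The point of the design is that every tool writes to and reads from the same plain file, so whichever one you open next can be handed the latest notes. Committing and pushing the file is left out here on purpose.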

If you want to learn the ecosystem start with Chat. Then Cowork. Then Code. That’s simplest to most complex.

Here’s a full rundown of this guide in a PDF:

IN THE NEWS

Anthropic Published an AI Jobs Report - And It's More Nuanced Than You Think

Anthropic released a chart showing theoretical vs observed AI coverage by industry. Full report below. White collar, computer-based jobs show 90-95% theoretical AI coverage. Blue collar, physical jobs - construction, agriculture, transportation - show the lowest.

Three things worth flagging:

First - the chart only covers LLMs. It completely ignores robotics and autonomous vehicles, which aren’t LLM-based. So this chart doesn’t show all AI, just LLMs. Oh, as an aside, I saw my first Waymo in London recently. Very cool! Transportation will have similarly high exposure to AI - just not LLMs.

Second - the "jagged frontier" is real. Ethan Mollick's concept. Some people see 10x productivity gains from AI. Others in different sectors think AI is overhyped because it's not good at their specific work yet. Both are right. Progress is uneven and that's confusing everyone. The chart shows this in its red areas.

Third - those theoretical numbers are speculation. The blue "theoretical coverage" outline looks impressive but nobody actually knows. They've got lots of PhDs at Anthropic and they’ve put together this serious-sounding report. But they still don't know. Predictions from even a year ago have been wrong. Don't treat this chart as gospel. We’ll likely be looking back at it and laughing.

Kyle's take: The useful signal here is that computer-based, white collar work is being transformed RIGHT NOW. Not in five years. Now. If your job involves a screen and a keyboard, you need to be experimenting (and building) with AI today. Not tomorrow. Today.

Here’s the full report from Anthropic.

The NYT Ran a Blind Taste Test: Human vs AI Writing. The Results Are Uncomfortable.

Kevin Roose at the New York Times created a quiz. Read two passages. Pick which one you prefer. One is written by a human. One by Claude Opus 4.5. Over 86,000 people have taken it.

I took three rounds live on stream today.

Literary fiction - I preferred the human version (it was Cormac McCarthy). But 57% of readers preferred the AI version.

Fantasy - I preferred what I thought was the human passage. It was the AI. The human was Ursula K. Le Guin. Should've recognised it. Didn't. Sorry Ursula….

Science writing - I read the passage and immediately thought “That’s Carl Sagan”. It was not. It was Claude Opus.

The other passage was Carl Sagan…

67% of readers (including me) also chose the AI passage over actual Carl Sagan…

Kyle's take: Pretty unsettling. The reality is that AI can now write content that two-thirds of people prefer, and it does it a hundred to a thousand times faster. You can have opinions about whether that's good or bad. I have plenty. But that IS the reality of the situation right now. Pretending otherwise doesn't help anyone.

Source: NYT

Want the full unfiltered discussion? Today I'm doing an Introduction to Claude Code - the terminal tool that lets you build actual apps. Come with questions. Join me for the daily AI news livestream where we dig into everything live.

Keep PROMPTING!

Kyle

Streaming on YouTube (with full 4K screen share) and TikTok (follow and turn on Live notifications).